Yesterday, in a knowledge session between Solution Architects, the topic of AWS Elastic File System came up, and after a short discussion we decided to take a closer look and set something up. To quote Top Gear: how hard could it be?

What is EFS?

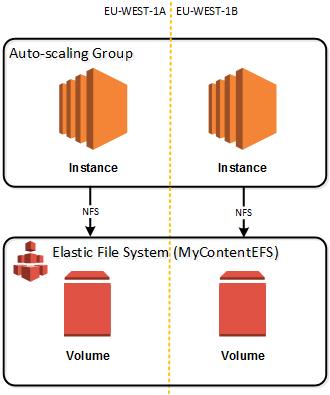

AWS Elastic File System, or EFS, is Amazon Web Services’ latest storage solution: a fully managed, simple and scalable file storage service for use with EC2 instances. As the name suggests, it grows and shrinks automatically with your storage needs. EC2 instances access EFS over NFS (v4.1), across multiple availability zones, with low latency and high throughput (50 MB/s per TB stored, with 100 MB/s burst). AWS lists the use cases for EFS as Big Data and analytics, media processing workflows, content management, web serving and home directories. Content management, you say? Hmmm :)
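To put those throughput figures into numbers: if the baseline scales at 50 MB/s per TB stored, a small content store gets a small baseline and leans on bursting. The helper below is a hypothetical back-of-the-envelope sketch that applies only that linear figure (taking 1 TB as 1000 GB and rounding down); the real EFS bursting model, with its burst credits, is more involved.

```bash
#!/bin/bash
# Hypothetical helper: baseline throughput (MB/s) for a given amount of
# stored data (GB), using the "50 MB/s per TB" figure quoted above.
# Assumes 1 TB = 1000 GB; bash integer arithmetic rounds down.
efs_baseline_mbps() {
  local stored_gb=$1
  # Reject anything that is not a whole number of GB
  [[ $stored_gb =~ ^[0-9]+$ ]] || return 1
  echo $(( stored_gb * 50 / 1000 ))
}
```

For example, `efs_baseline_mbps 200` prints 10, so a 200 GB content store would have a baseline of roughly 10 MB/s, well below the 100 MB/s burst figure.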

In my experience, scalable single sources of file-system-based content were expensive and difficult to deploy. So much so that product and implementation strategy meant putting all content in a database was by far and away the most logical route to take. So could EFS now resolve that headache? I will give it a test to find out.

What do I have to set up?

So I will simulate a website setup with an application server tier, which would host my Tomcat (or similar) application servers, and a back-end file system that is mounted on those application servers so that they can use the files. Onto this file system I will deploy my content. I won’t install or configure Tomcat; it is simple to do and is covered very well elsewhere.

So, I will need:

- An auto-scaling group covering two availability zones (eu-west-1a, eu-west-1b) with two instances of Amazon Linux (no Tomcat, no auto-scaling rules for now)

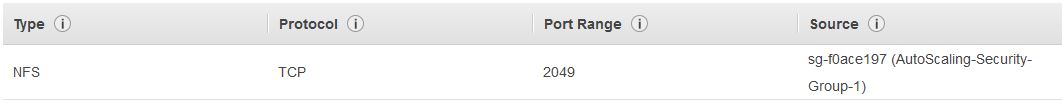

- A security group that allows my auto-scaling instances to talk NFS to my EFS

- An EFS file system, created and mounted on my instances

For my auto-scaling group, I have gone and created a simple one, and it is up and running across my two availability zones. I have also terminated an instance or two, just for fun. That’s not related to this post; it is just fun to terminate something and watch it auto-magically reappear.
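For anyone who prefers the command line to the console, the auto-scaling part of the setup could be sketched with the AWS CLI roughly as follows. This is a sketch under assumptions, not what I ran: the AMI ID, security group, subnets (one in eu-west-1a, one in eu-west-1b) and the t2.micro instance type are placeholders, a default region of eu-west-1 is assumed to be configured, and AWS now recommends launch templates over launch configurations for new setups.

```bash
#!/bin/bash
# Sketch: a launch configuration plus an auto-scaling group of exactly two
# instances spread over two subnets (one per availability zone), with no
# scaling policies. Every argument is a placeholder you supply.
create_demo_asg() {
  local lc_name=$1 asg_name=$2 ami_id=$3 sg_id=$4 subnet_a=$5 subnet_b=$6

  # What each instance looks like: AMI, size and security group
  aws autoscaling create-launch-configuration \
    --launch-configuration-name "$lc_name" \
    --image-id "$ami_id" \
    --instance-type t2.micro \
    --security-groups "$sg_id" || return 1

  # Where and how many: two instances across the two subnets
  aws autoscaling create-auto-scaling-group \
    --auto-scaling-group-name "$asg_name" \
    --launch-configuration-name "$lc_name" \
    --min-size 2 --max-size 2 --desired-capacity 2 \
    --vpc-zone-identifier "${subnet_a},${subnet_b}"
}
```

Usage would look like `create_demo_asg web-lc web-asg <ami-id> <sg-id> <subnet-a> <subnet-b>`, with your own IDs filled in.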

My security group allows instances that are members of my auto-scaling group’s security group to access the EFS volumes via the NFS protocol (TCP port 2049).
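A CLI sketch of that rule, assuming the instances already have their own security group: the EFS-side group accepts NFS (TCP port 2049) only from members of the instances’ group. The group name and description are hypothetical, and the VPC and security group IDs are placeholders.

```bash
#!/bin/bash
# Sketch: create the EFS-side security group and allow inbound NFS from the
# instances' security group. Arguments: VPC ID, instances' security group ID.
# Prints the new security group ID on success.
create_efs_sg() {
  local vpc_id=$1 instance_sg_id=$2 efs_sg_id

  # --query/--output text makes the CLI print just the new group's ID
  efs_sg_id=$(aws ec2 create-security-group \
    --group-name efs-nfs-access \
    --description "NFS access to EFS from app servers" \
    --vpc-id "$vpc_id" \
    --query GroupId --output text) || return 1

  # NFS is TCP 2049; the source is a security group, not a CIDR range
  aws ec2 authorize-security-group-ingress \
    --group-id "$efs_sg_id" \
    --protocol tcp --port 2049 \
    --source-group "$instance_sg_id" || return 1

  echo "$efs_sg_id"
}
```

Using the instances’ security group as the source means new instances launched by auto-scaling are allowed in automatically, without touching the rule.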

I can now create my EFS for my website content.

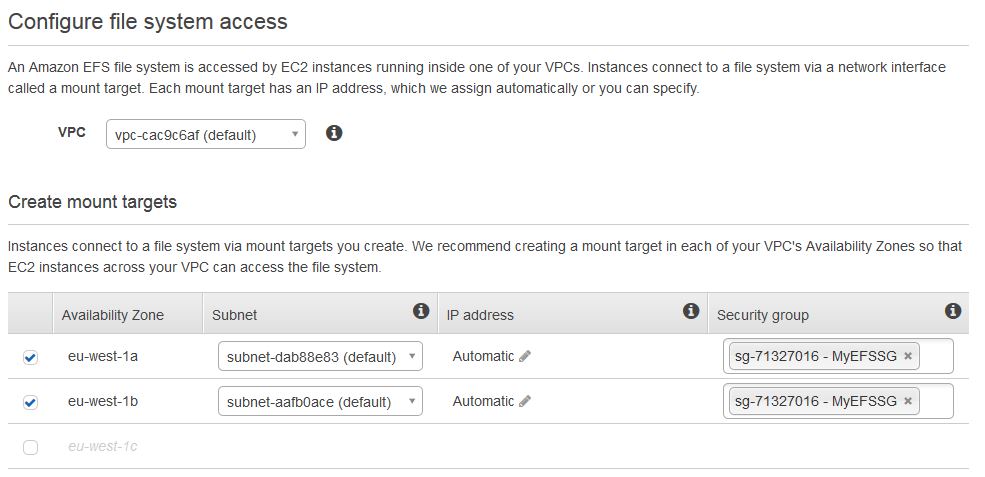

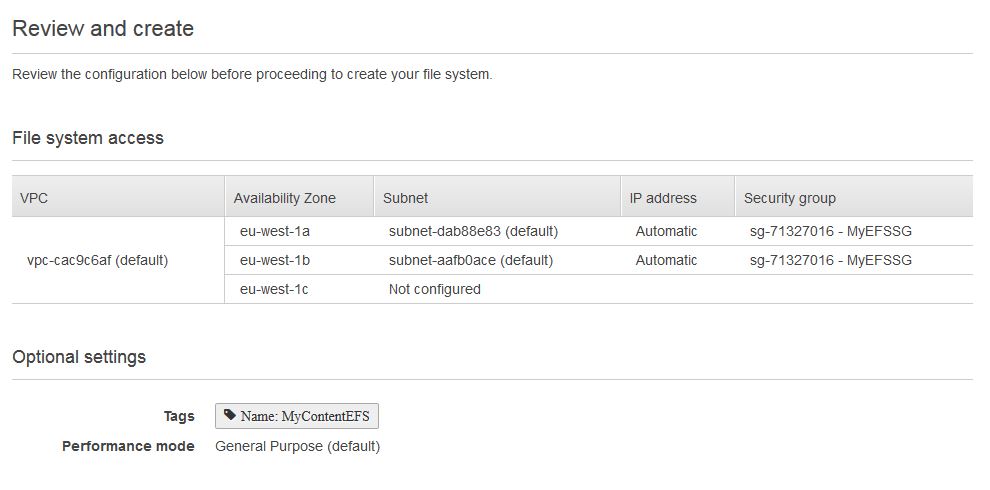

I first need to configure the file system access, which consists of my VPC, my mount targets (availability zones) and the security group that defines the source of access requests (the one I created earlier):

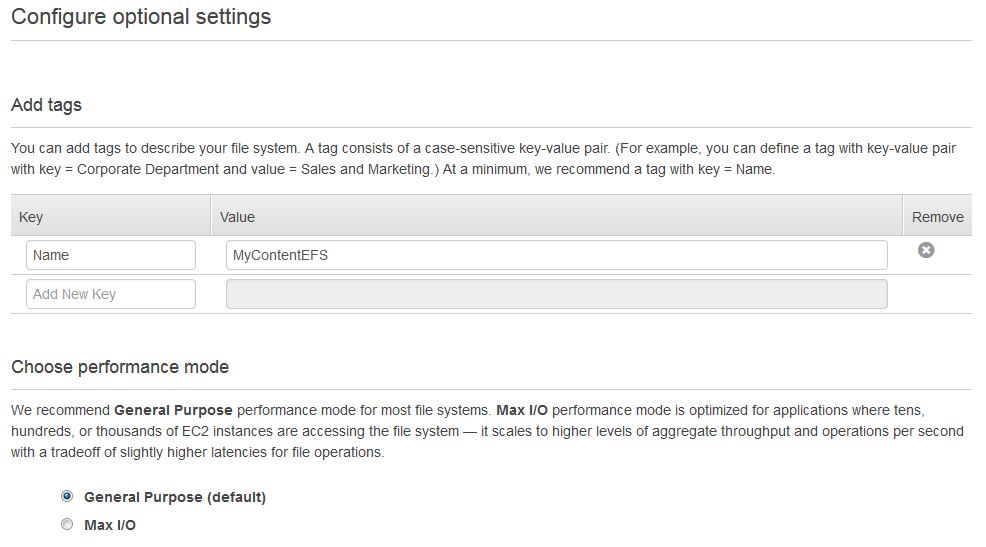

Then I configure the optional settings. I have chosen to give it a friendly name and stuck with the default “Performance Mode” of General Purpose.

Then comes the final review step, and I am done. That was it: no configuring disk sizes and no difficult calculations of how much content I have. It’s done.

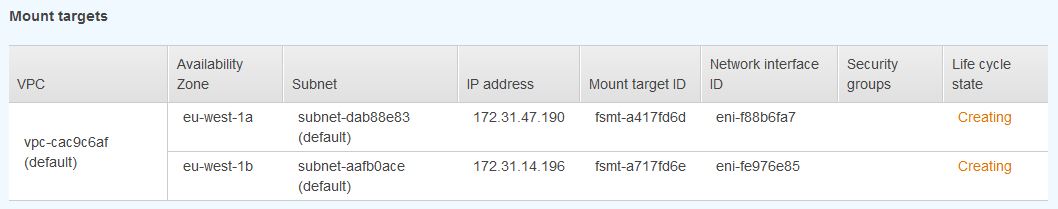
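The same console steps could be scripted with the AWS CLI along these lines. This is a sketch under assumptions: the creation token doubles as the friendly name, the subnet IDs (one per availability zone) and the security group ID are placeholders, and the loop waits for the file system to become available before adding mount targets, since mount targets cannot be created while the file system is still being created.

```bash
#!/bin/bash
# Sketch: create a General Purpose file system with a Name tag, wait until it
# is "available", then add one mount target per subnet. Prints the new
# file system ID. Arguments: name, EFS security group ID, subnet IDs...
create_efs_with_targets() {
  local name=$1 efs_sg_id=$2 fs_id state subnet i
  shift 2  # the remaining arguments are subnet IDs

  fs_id=$(aws efs create-file-system \
    --creation-token "$name" \
    --performance-mode generalPurpose \
    --tags "Key=Name,Value=${name}" \
    --query FileSystemId --output text) || return 1

  # Poll the life cycle state (up to 30 checks, 5 seconds apart)
  for (( i = 1; i <= 30; i++ )); do
    state=$(aws efs describe-file-systems --file-system-id "$fs_id" \
      --query 'FileSystems[0].LifeCycleState' --output text)
    [[ "$state" == available ]] && break
    sleep 5
  done
  [[ "$state" == available ]] || return 1

  # One mount target per subnet, all guarded by the EFS security group
  for subnet in "$@"; do
    aws efs create-mount-target \
      --file-system-id "$fs_id" \
      --subnet-id "$subnet" \
      --security-groups "$efs_sg_id" >/dev/null || return 1
  done

  echo "$fs_id"
}
```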

After a short while my volumes are ready, and I can keep track of the creation status in the main EFS dashboard under “Life cycle state”.
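If you would rather watch the life cycle state from a script than from the dashboard, the CLI’s `describe-mount-targets` call exposes the same field. The helper below is a sketch that waits until every mount target of a file system reports “available”; the function name, retry count and 10-second interval are my own assumptions.

```bash
#!/bin/bash
# Sketch: succeed once every mount target of the file system is "available".
# Arguments: file system ID, optional number of checks (default 30).
# Checks are 10 seconds apart; returns 1 if the targets never become ready.
wait_for_mount_targets() {
  local fs_id=$1 max_tries=${2:-30} states i
  for (( i = 1; i <= max_tries; i++ )); do
    # Text output lists one state per mount target, separated by whitespace
    states=$(aws efs describe-mount-targets --file-system-id "$fs_id" \
      --query 'MountTargets[].LifeCycleState' --output text) || return 1
    # Ready when there is at least one state and none is anything else
    if [[ -n "$states" ]] && ! tr -s '[:space:]' '\n' <<<"$states" | grep -qv '^available$'; then
      return 0
    fi
    if (( i < max_tries )); then
      sleep 10
    fi
  done
  return 1
}
```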

Next, I am going to test-drive mounting the volume on my instance. The EFS dashboard provides instructions for doing this, which I ran in an SSH session, from the root directory:

Step 1: If needed, install the NFS client on your EC2 instance

```bash
sudo yum install -y nfs-utils
```

Step 2: Create a new directory on your EC2 instance, such as “efs”

```bash
sudo mkdir efs
```

Step 3: Mount your file system using the DNS name.

```bash
sudo mount -t nfs4 -o nfsvers=4.1 $(curl -s http://169.254.169.254/latest/meta-data/placement/availability-zone).fs-d3658b1a.efs.eu-west-1.amazonaws.com:/ efs
```
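The mount target name in step 3 is built from three parts: the instance’s availability zone (looked up from the instance metadata service), the file system ID and the region. A small sketch that makes those parts explicit; the function names are hypothetical, deriving the region by dropping the AZ’s final letter assumes standard AZ names such as eu-west-1a, and the metadata lookup uses an IMDSv2 session token, which also works on instances that still allow the token-less call used above.

```bash
#!/bin/bash
# Sketch: helpers for building the AZ-specific EFS DNS name used in step 3:
#   <availability-zone>.<file-system-id>.efs.<region>.amazonaws.com

# Build the DNS name from its three parts
efs_dns_name() {
  local az=$1 fs_id=$2 region=$3
  echo "${az}.${fs_id}.efs.${region}.amazonaws.com"
}

# Assumes a standard AZ name: region plus one letter (eu-west-1a -> eu-west-1)
region_from_az() {
  echo "${1%?}"
}

# Ask the instance metadata service for this instance's AZ (IMDSv2 token flow)
current_az() {
  local token
  token=$(curl -s -X PUT http://169.254.169.254/latest/api/token \
    -H "X-aws-ec2-metadata-token-ttl-seconds: 300") || return 1
  curl -s -H "X-aws-ec2-metadata-token: ${token}" \
    http://169.254.169.254/latest/meta-data/placement/availability-zone
}

# Mount the file system on the given directory, as in steps 2 and 3
mount_efs() {
  local fs_id=$1 mount_point=$2 az
  az=$(current_az) || return 1
  sudo mkdir -p "$mount_point"
  sudo mount -t nfs4 -o nfsvers=4.1 \
    "$(efs_dns_name "$az" "$fs_id" "$(region_from_az "$az")"):/" "$mount_point"
}
```

On an instance, `mount_efs fs-d3658b1a /efs` would then perform steps 2 and 3 in one go.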

Once that is done, I can switch to the directory and create a simple index.html file for my eventual Tomcat server to serve. If I then log on to my other instance, in the other availability zone, I can see the same file there, because both instances mount the same file system. This means that if I write my content to the file system as I have done, it is immediately available in the other availability zones and all my sites are updated.
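To check from a script that the file system really is mounted (rather than the efs directory just being an empty local folder), one option is to look the mount point up in /proc/mounts. A minimal sketch; the function name is hypothetical, and the optional second argument exists only so the check can be tried against a sample file.

```bash
#!/bin/bash
# Sketch: succeed if the given directory is mounted with file system type nfs4.
# Arguments: mount point (e.g. /efs), optional mounts file (default /proc/mounts)
is_nfs4_mount() {
  local mount_point=$1 mounts_file=${2:-/proc/mounts}
  # /proc/mounts fields: device, mount point, type, options, dump, pass
  awk -v mp="$mount_point" '$2 == mp && $3 == "nfs4" { found = 1 }
    END { exit !found }' "$mounts_file"
}
```

On each instance, `is_nfs4_mount /efs && cat /efs/index.html` should then show the same file.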

Because I did this manually, I would need to repeat it every time my auto-scaling group scales out, which defeats the purpose of auto-scaling. However, if I mount this directory at instance initialization time (e.g. with Chef), it will be mounted whenever a new instance starts. To test this, I made a very simple launch script and updated my launch configuration (by making a new one, as launch configurations cannot be edited), adding the following to the user data portion of the configuration:

```bash
#!/bin/bash
# User data runs once, as root, when the instance first boots
cd /
sudo mkdir efs
sudo mount -t nfs4 -o nfsvers=4.1 $(curl -s http://169.254.169.254/latest/meta-data/placement/availability-zone).fs-d3658b1a.efs.eu-west-1.amazonaws.com:/ efs
```

Warning: I would not use this code in production. No really, please don’t.
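One weakness of that user data is that the mount is not recorded anywhere, so it is lost if the instance reboots, and it assumes the NFS client is already installed. A slightly more defensive variant could install nfs-utils, add an /etc/fstab entry (with `_netdev`, so the mount waits for networking) only if one is not already there, and then mount from fstab. The generator function below is a hypothetical sketch that prints such a script for a given file system ID, region and mount point; the same production warning applies.

```bash
#!/bin/bash
# Hypothetical helper: print a user data script that mounts EFS via /etc/fstab.
# Arguments: file system ID, region, mount point (e.g. fs-d3658b1a eu-west-1 /efs)
make_efs_user_data() {
  local fs_id=$1 region=$2 mount_point=$3
  # Unquoted heredoc: ${fs_id} etc. expand now; \$ stays literal for boot time
  cat <<EOF
#!/bin/bash
# Generated user data: runs once as root at first boot, so no sudo needed
yum install -y nfs-utils
# This instance's availability zone, via the metadata service (as above)
AZ=\$(curl -s http://169.254.169.254/latest/meta-data/placement/availability-zone)
DNS="\${AZ}.${fs_id}.efs.${region}.amazonaws.com"
mkdir -p ${mount_point}
# Add the fstab entry only once, then mount all nfs4 entries from fstab
grep -q " ${mount_point} nfs4 " /etc/fstab || \\
  echo "\${DNS}:/ ${mount_point} nfs4 nfsvers=4.1,_netdev 0 0" >> /etc/fstab
mount -a -t nfs4
EOF
}
```

For example, `make_efs_user_data fs-d3658b1a eu-west-1 /efs > efs-userdata.sh` writes the script, which can then be given to a new launch configuration with `--user-data file://efs-userdata.sh`.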

Summary

The most complicated part of all this is mounting the file system, as creating the fully managed, scalable storage itself is incredibly easy. For content management systems like SDL Web (Tridion), this is a real help in deploying content in a scalable and reliable way.

Hi Julian,

It is a very useful post. I am new to AWS and would like to ask how to install Drupal on this EFS file system. After that, I want to create an AMI in order to create an auto-scaling group.

Would you mind helping me?

Hi Sergio, unfortunately I do not have enough specific knowledge of Drupal installations to say what the best-practice approach is for this particular software. Creating an AMI is a straightforward process. Ideally you would just use a pre-built, supported AMI, but if you need something custom, you will need to build the instance as you need it and then create an AMI from that instance. See here for more information: https://docs.aws.amazon.com/toolkit-for-visual-studio/latest/user-guide/tkv-create-ami-from-instance.html.